CUDA

Several mini-projects are available for learning about GPGPU technologies, primarily CUDA.

Realized as multiple mini-projects during the CUDA course, it aims to provide students with a comprehensive overview of various algorithms that exist in massively parallel programming. Additionally, it addresses the challenges that may arise when accessing data with thousands of cores simultaneously. Programming with SIMD technologies can be challenging, but this course greatly aided my understanding of the fundamentals and assisted in initiating my B.Sc. final project.

CUDA, OpenMP, C++, Boost

The Project

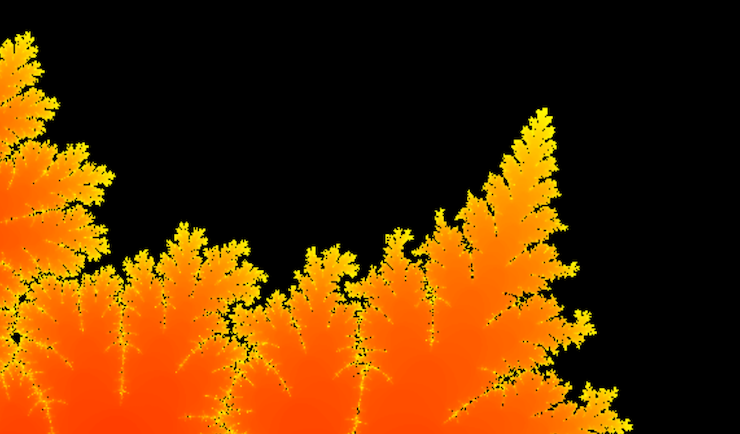

Fractals and simple mathematics applications

We explored basic mathematical algorithms for generating fractals, specifically Mandelbrot, Julia, and Newton sets. These implementations not only helped us discover CUDA, the working environment for the course but also facilitated our understanding of the main principles of SIMD programming.

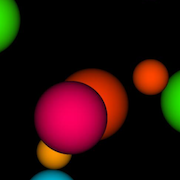

Simulating OpenGL with GPGPU computations

OpenGL is an excellent library for harnessing the power of GPUs when rendering 3D scenes. Here, we have attempted to create a simplified version of OpenGL specifically for rendering scenes comprised solely of mathematically defined perfect spheres, utilizing ray-tracing technology (without ray re-emission). This approach results in a straightforward OpenGL Phong rendering.

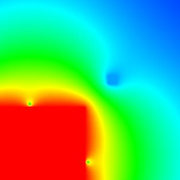

Advanced research projects on performance drawbacks and optimization

Despite CUDA being a powerful technology, misusing it can lead to very poor performance, sometimes falling below the initial 'sequential' performance obtained on a standard processor. In the final part of the course, we were encouraged to conduct advanced research in a specific application of our choice, aiming to understand its efficiency limitations. The goal was to experiment with various configurations until we found the optimal one.

Miscellaneous

| Type | Course project |

| Degree | B.Sc. HE-Arc, 3rd year |

| Course | CUDA Programming |

| Duration | ~60 hours |

| Supervisor | Prof. Cédric Bilat |